This is because deepfakes are “not just lies, but ones that betray sight and sound, two of our most innate and cherished senses”. The deception is not simply in the form of false narratives these new deceptions have a visceral quality.

Likewise, the problem of false accounts is not a new problem. “Fake news,” for example, does indeed go one step further than the problem of conflicting interpretation of reality it represents attempts to create conflicting narratives. The influence of “fake news” is rightfully disconcerting, but for all that, fake stories have always been with us, and so their influence is not new.ĭeepfakes, however, go further. And so different people interpreting reality differently is nothing new. But at least our social structures and institutions, and even biological evolutionary development itself, have already accounted for this level of disagreement. There are an indefinite number of reasons for this, and the challenge of conflicting interpretations will never stop. But it is important to note the sense in which this is a genuinely new problem.įor as long as the prefrontal cortex has been around, there have been different legitimate ways to interpret the events around us. Some of the challenges are futuristic, but the advent of deepfake technology forces us to face an imminent one: trust in what is real. A reasonable fear is that the overall effect of deepfakes will be to undermine a “shared sense of reality” that is essential to a healthy democracy.

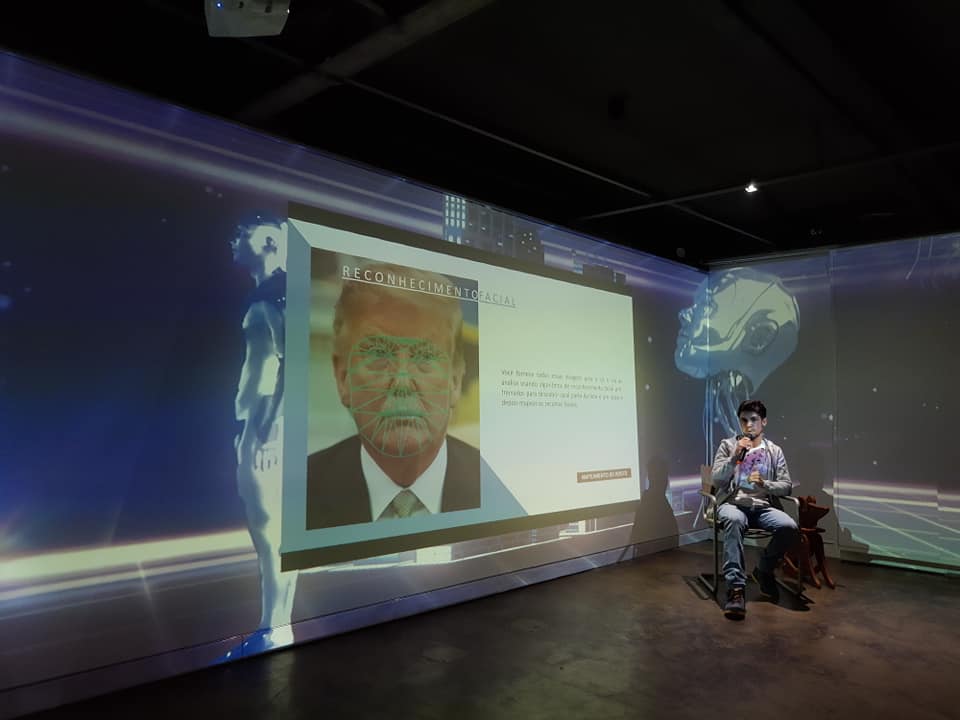

There are several distinct legal and ethical challenges posed by the revolution brought on by artificial intelligence. Despite the fact that President Obama never met Iranian President Rouhani and President Rouhani is still in power, Gosar’s tweet may have had its intended effect. This is in line with another recent data point: the 2016 US presidential election demonstrated that people are drawn to, and share, stories that confirm their political views, even if those stories are false. Research shows that political campaigns are not effective at persuading voters to change their views. Instead, what campaigns can do is reinforce voters’ political orientations and motivate them to vote . On January 6, 2020, Representative Paul Gosar of Arizona tweeted a deepfake photo of Barack Obama with Iranian president Hassan Rouhani with the caption, “The world is a better place without these guys in power,” presumably as a justification of the killing of Iranian General Soleimani. When called out for disseminating a deepfake, Gosar protested that he never actually claimed that the photo was real .Ĭould such blatant gaslighting be effective? Yes. Conversely, another harm of deepfakes is that they can cast doubt on authentic stories . It is chilling to think of the effect they may have on the 2020 US presidential election.Įven if deepfakes are identified and pointed out, that may not matter. An obvious misuse of deepfakes is their potential role in creating more sophisticated fabricated news stories. The personal harms that can be unleashed by deepfakes are limited only by the imaginations of bad actors, but they are dwarfed by the scale of societal harms we may soon experience. Twitter and Pornhub followed suit .Īlthough researchers are striving to create tools to detect deepfakes, the harms will likely be merely slowed, rather than stopped. 
Samantha Cole wrote several articles that brought attention to the pornographic deepfakes circulating online, and in February 2018 Reddit banned the community of users sharing deepfake videos because they violated Reddit’s policy against the posting of nonconsensual pornography. It didn’t take long before another Reddit user, “ deepfakeapp ,” published FakeApp, an application making it possible for less-tech-savvy computer users to create their own deepfakes. The term comes from the alias of Reddit user “ deepfakes ,” who in late 2017 used open-source machine learning tools to put the faces of Scarlett Johansson, Maisie Williams, and other celebrities on the bodies of women in pornographic videos and then posted the videos on Reddit. A “ deepfake ” is a video or still image of a person that is modified to depict someone else.
